The Linux kernel project has spent quite some time navigating the use of AI tools, and the response has usually been somewhere between “figure it out yourself” and “we’ll get back to you.”

Late last year, at the 2025 Maintainers Summit, Sasha Levin pushed for some documented consensus. What came out of it: human accountability for patches is non-negotiable, purely machine-generated submissions are not welcome, and tool use should be disclosed.

He promised to put something in writing without committing to enforce it, and that work has now shipped with Linux 7.0.

What is it?

Linux’s AI coding assistants policy.

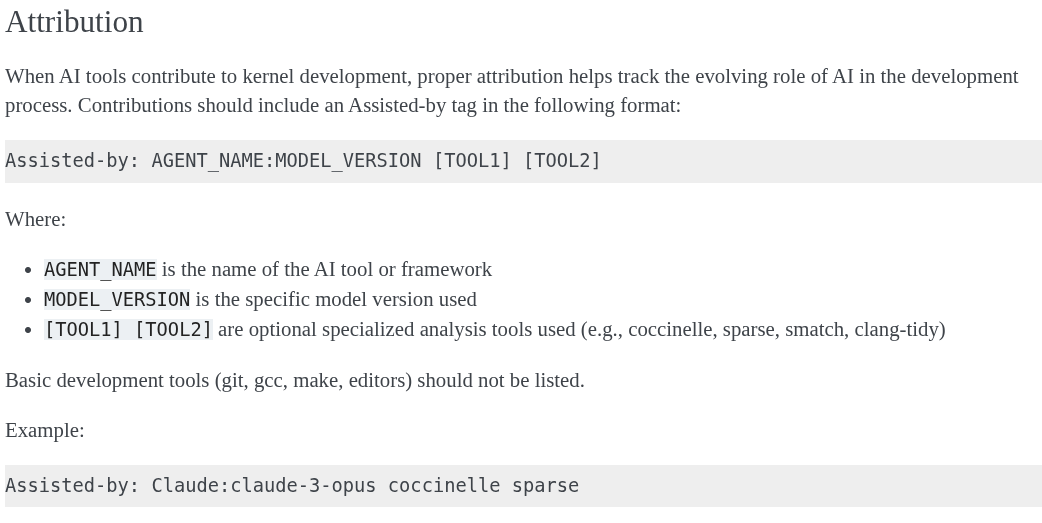

The new document is called AI Coding Assistants and lives in the kernel’s process docs alongside the rest of the contribution guidelines. The short version: AI-assisted contributions must still comply with the kernel’s GPL-2.0-only licensing, AI agents cannot add Signed-off-by tags, and patches that had AI help should carry an “Assisted-by” tag.

The Developer Certificate of Origin (DCO), which a contributor certifies by adding their Signed-off-by tag, exists so that a human is accountable for every patch. AI assistance does not change that hard requirement.

Basically, the human submitter reviews everything the AI produced, confirms it meets licensing requirements, and puts their own name on it with an appropriate mention that AI was used.

The Assisted-by tag takes the form `Assisted-by: AGENT_NAME:MODEL_VERSION [TOOL1] [TOOL2]`, where the optional bracketed entries name any additional tools that were used, whether one or several. The document gives `Assisted-by: Claude:claude-3-opus coccinelle sparse` as an example.
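To make the trailer format concrete, here is a minimal Python sketch that pulls Assisted-by trailers out of a commit message and splits them into agent, model, and tools. This is not the kernel’s own tooling; the regex, the `AssistedBy` class, the `parse_assisted_by()` helper, and the sample commit message are all hypothetical, and the sketch assumes tools are written as plain space-separated words after the model, as in the document’s example.

```python
import re
from dataclasses import dataclass, field

# Hypothetical pattern for the trailer described in the policy:
#   Assisted-by: AGENT_NAME:MODEL_VERSION [TOOL1] [TOOL2]
# Assumption: the optional tools follow the model as space-separated words.
TRAILER_RE = re.compile(
    r"^Assisted-by:\s*(?P<agent>[^:\s]+):(?P<model>\S+)(?P<tools>(?:\s+\S+)*)\s*$"
)


@dataclass
class AssistedBy:
    agent: str
    model: str
    tools: list[str] = field(default_factory=list)


def parse_assisted_by(message: str) -> list[AssistedBy]:
    """Collect every Assisted-by trailer found in a commit message."""
    results = []
    for line in message.splitlines():
        m = TRAILER_RE.match(line.strip())
        if m:
            # An empty tools group splits to an empty list.
            results.append(AssistedBy(m["agent"], m["model"], m["tools"].split()))
    return results


# Hypothetical commit message; the subject, body, and sign-off are made up.
EXAMPLE_MESSAGE = """\
subsys: fix example bug (hypothetical)

Body text describing the change.

Assisted-by: Claude:claude-3-opus coccinelle sparse
Signed-off-by: Jane Developer <jane@example.org>
"""

if __name__ == "__main__":
    for trailer in parse_assisted_by(EXAMPLE_MESSAGE):
        print(trailer)
        # AssistedBy(agent='Claude', model='claude-3-opus', tools=['coccinelle', 'sparse'])
```

Note that the human’s Signed-off-by line sits next to the Assisted-by trailer rather than being replaced by it, which mirrors the policy’s split between disclosure and accountability.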

Back then, Linus Torvalds was not even convinced a dedicated tag was necessary, suggesting the changelog body would do the job. Now, though, the kernel community seems to have settled on the tag anyway.

It’s already in use

As we covered earlier in the week, Greg Kroah-Hartman (GKH) seems to have had AI-assisted fuzzing running in his kernel tree for a while now, in a branch called “clanker.” He started with the ksmbd and SMB code, found some potential issues, and submitted fixes with a note telling reviewers to verify everything independently before trusting any of it.

That is more or less the workflow the new policy was written around: AI surfaces issues, and a human with decades of kernel experience decides what is real, writes the fix, and takes responsibility. It is no surprise that GKH is the one doing it, given that he is the stable kernel maintainer and has probably dealt with more bad patches than most.

Other projects have gone in a different direction. Gentoo banned AI-generated contributions entirely in 2024, with its council citing copyright risk, code quality, and ethical concerns.

NetBSD’s commit guidelines put LLM-generated code in the “tainted code” category, requiring written approval from the core developers before any of it goes in.

In contrast, Linux is not banning anything. Whether that turns out to be the sensible call or just a lenient one will depend on how seriously people actually take the “a human reviewed this” part.

